- About

Getting to know us

- Services

- Managed Services

- Application Services

- Cloud Services

- Data Science

- Consulting Services

- Technology Solutions

Managed Services

Application Services

Data Science

Consulting Services

Technology Solutions

- Industries

- Resources

Resources

- Contact

- Contact us

Methods on Tables and Beyond – Auto-generation of code for APEX Applications

Contents

When developing APEX applications, native DML is the obvious way to go as it handles DML operations without having to write custom code. However, in many real-world scenarios, using the native operations doesn't give the ability to manipulate the data as far as may be required. For example, if pressing submit on your APEX screen doesn't just insert/update/delete row(s) in a single table, you might need to use some PL/SQL to achieve what you want.

If you're developing a sizeable app or suite of apps, there will likely be a fair amount of bespoke PL/SQL required to perform certain DML operations. You might even decide to handle all DML operations via custom PL/SQL for consistency. Such an approach allows you greater flexibility, but it also brings an overhead of effort as you need to generate code for each of your tables. Another important consideration with this approach is that you must maintain the in-built security that automated processes provide - e.g. lost update protection.

Methods on Tables

This is where the utility 'Methods on Tables' comes in. This utility allows you to generate PL/SQL packages automatically for your tables.

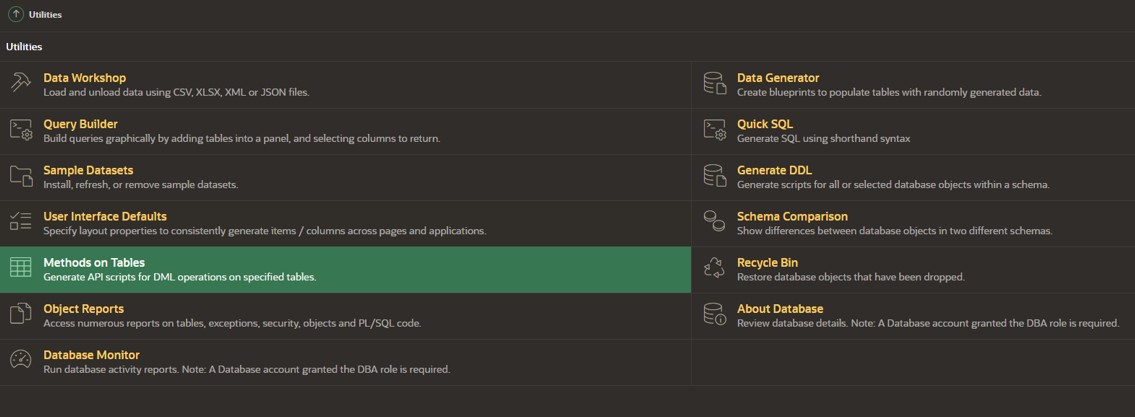

Methods on Tables is found in the utilities in APEX builder.

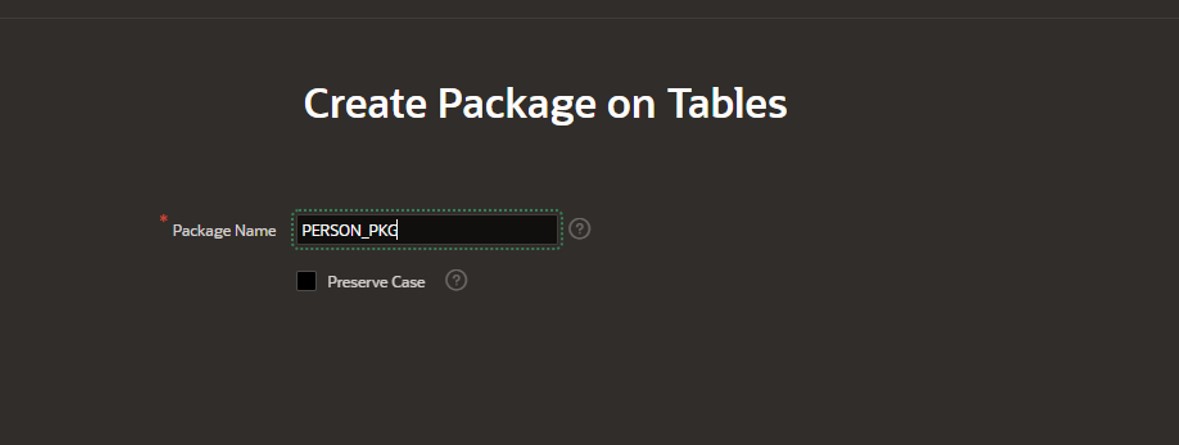

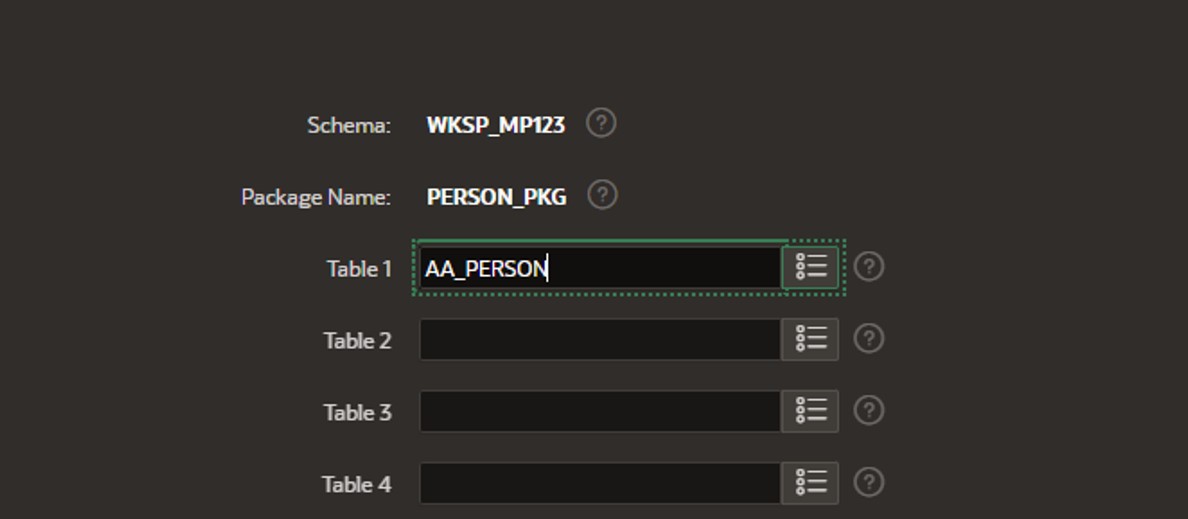

You enter your package name and select the table(s) that you need methods for. As a rule, I would advise against generating a single package for multiple tables, simply from a usability point of view. Having a single package per table provides clarity when maintaining code.

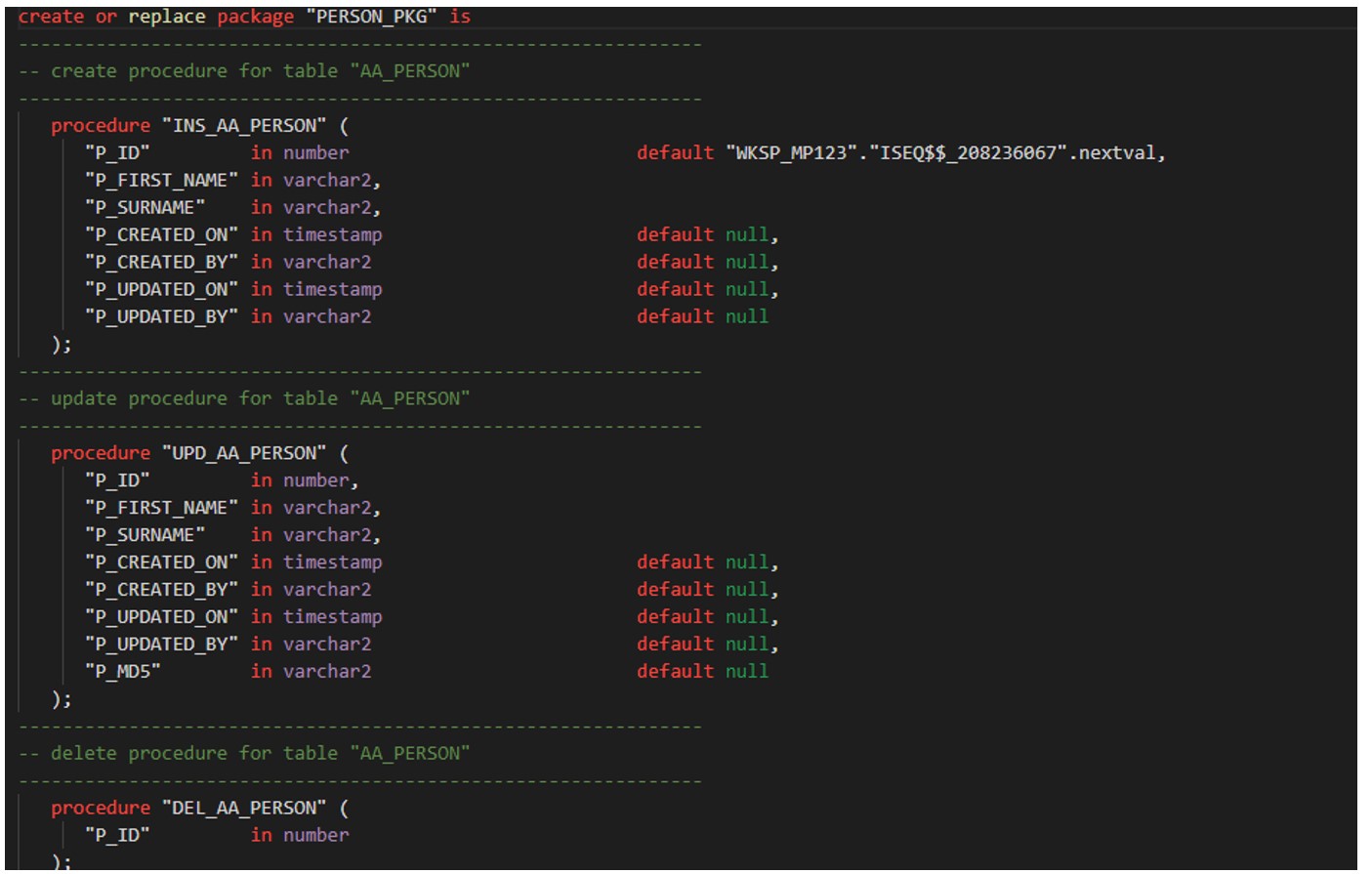

The package is then generated:

Within the update procedure, you can see that we have code that creates a checksum and sends back an error if the record has been updated since the page was loaded.

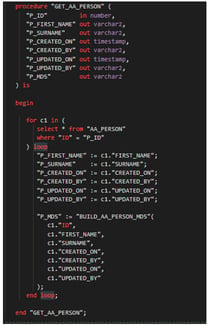

Another procedure generates the initial checksum as part of the 'get' process, used when the page is loaded:

Utilising this method is great for consistency, as all packages will contain the same format for the logic.

For maintainability, don't add bespoke business logic to the generated packages, keep them at their most basic. That way if tables change, you don't risk losing the code you've added since you just regenerate the package. Business logic that utilises your basic table packages can be built as wrappers. For example, if you are creating a user - maybe the person table needs updating as well as the user table in one command. If your table changes, you may need to update your business logic package (e.g. to reference a new column), but your base DML can be regenerated.

AI Possibilities

With the introduction of AI into the world of development (and everywhere else!), the options available to reduce repetitive tasks around code generation are only going to get smarter. I investigated using ChatGPT to write me a function that would allow me to generate tables based on a few parameters - with some standardised audit columns and a related trigger to populate them. It took me a few minutes to put together an, albeit basic, request:

'Please generate a PL/SQL function that returns the PL/SQL code for table/trigger creation, based on a number of parameters: 1 - the table name, 2 - a comma-separated string which contains the required column name and definitions (e.g. surname varchar2(100), first_name varchar2(100)). The procedure should return the code to create the table and it should automatically have the first column named 'ID' which should be a number(10) type. It should also include 4 columns at the end - created_On (date), created_by (varchar2(100)), updated_on (date) and updated_by(varchar2). The function should also return the code for a trigger on the table, that updates the created_on/created_by/updated_on/updated_by columns accordingly whenever a record is inserted or updated.'

And the following code was returned, basic but functional – all within about 5 minutes. I now have the basis of a table for creation for any table within my project.

CREATE OR REPLACE FUNCTION generate_table_and_trigger(

p_table_name IN VARCHAR2,

p_columns IN VARCHAR2

) RETURN VARCHAR2

IS

l_table_code VARCHAR2(4000);

l_trigger_code VARCHAR2(4000);

l_final_code VARCHAR2(4000);

BEGIN

-- Generate the CREATE TABLE statement

l_table_code := 'CREATE TABLE ' || p_table_name || ' (' || CHR(10) ||

' ID NUMBER(10),' || CHR(10) ||

p_columns || ',' || CHR(10) ||

' created_on DATE,' || CHR(10) ||

' created_by VARCHAR2(100),' || CHR(10) ||

' updated_on DATE,' || CHR(10) ||

' updated_by VARCHAR2(100)' || CHR(10) ||

');';

-- Generate the CREATE TRIGGER statement

l_trigger_code := 'CREATE OR REPLACE TRIGGER ' || p_table_name || '_trg' || CHR(10) ||

'BEFORE INSERT OR UPDATE ON ' || p_table_name || CHR(10) ||

'FOR EACH ROW' || CHR(10) ||

'BEGIN' || CHR(10) ||

' IF INSERTING THEN' || CHR(10) ||

' :NEW.created_on := SYSDATE;' || CHR(10) ||

' :NEW.created_by := USER;' || CHR(10) ||

' END IF;' || CHR(10) ||

' :NEW.updated_on := SYSDATE;' || CHR(10) ||

' :NEW.updated_by := USER;' || CHR(10) ||

'END;';

-- Combine table and trigger code

l_final_code := l_table_code || CHR(10) || CHR(10) || l_trigger_code;

RETURN l_final_code;

END;

/

Conclusion

By investing some time at the start of a project in automatic code generation, you can potentially save yourself many hours down the road in terms of PL/SQL code maintenance. Utilising tools such as 'Methods on Tables' can help with this, and the potential to use AI in the area is vast. This, of course, frees up developers to do more interesting things and reduces the time spent on repetitive and boring tasks.

For more information, check out our APEX services, and if you liked this blog, check out our other APEX blogs here.

.png?width=250&name=stonewater-logo%20(1).png)